For the past several years, insider risk management has focused almost entirely on people: employees moving data they shouldn’t, contractors accessing systems beyond their scope, and departing team members taking files on their way out. Those risks haven’t gone away. But the definition of “insider” has expanded in ways most security programs haven’t caught up with.

Today, AI tools and AI agents operate inside your environment with the same access as your employees, and sometimes more. They read documents, query databases, move data between systems, and take actions on behalf of users. Some were approved by IT. Many were not. According to Gartner®, 32% of IT workers using generative AI tools at work say they keep it hidden, hindering discovery from cybersecurity teams.1 That number only accounts for the human side. When you add the growing ecosystem of AI agents acting autonomously across SaaS applications, identity systems, and cloud infrastructure, the blind spots multiply.

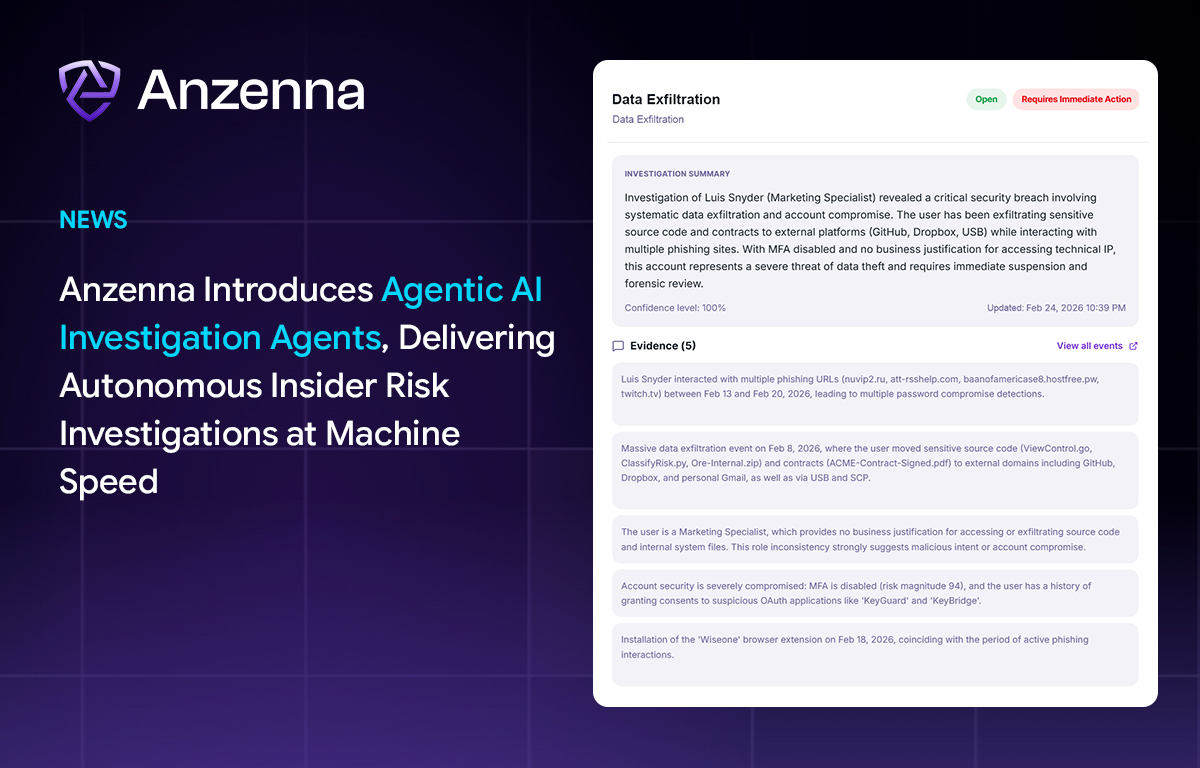

This is the problem we’ve been building toward at Anzenna, and it’s why we’re introducing Agentic AI Investigation Agents today.

Why Insider Risk Investigations Are the Bottleneck in Modern SOCs

Talk to any SOC analyst or insider risk investigator, and you’ll hear the same thing: the alerts aren’t the problem. The investigation is. When a risk signal fires, an analyst has to manually pull logs from an identity provider, cross-reference activity in SaaS applications, check endpoint telemetry, review DLP alerts, and piece together a timeline of what actually happened. That process takes hours. Sometimes days.

The math doesn’t work. Alert volumes are going up. The number of data sources to check is going up. The complexity of what constitutes an “insider” is going up. But investigation capacity stays flat. Hiring more analysts isn’t a realistic answer for most teams. The result is that investigations get delayed, deprioritized, or never started at all. That’s how real threats slip through.

How Agentic AI Investigation Agents Automate Insider Threat Detection and Response

Anzenna’s Investigation Agents handle the labor-intensive parts of an insider risk investigation autonomously. When a risk signal is detected, whether it originates from a human, an AI tool, or an AI agent, the Investigation Agent gets to work: collecting evidence across 130+ integrated enterprise applications, correlating behavioral patterns across platforms, applying role-based context and historical baselines, and assembling a complete case file with findings and recommended next steps.
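To make the shape of that workflow concrete, here is a minimal Python sketch of the evidence-to-case-file step. It is illustrative only and is not Anzenna's implementation: the `Evidence` and `CaseFile` types, their fields, and the `build_case_file` function are hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class Evidence:
    """One event pulled from one source (hypothetical schema)."""
    source: str          # e.g. "identity_provider", "saas", "endpoint", "dlp"
    actor: str           # a human user, an AI tool, or an AI agent
    timestamp: datetime
    summary: str


@dataclass
class CaseFile:
    """Result of an investigation: ordered timeline plus visible reasoning."""
    actor: str
    timeline: list = field(default_factory=list)
    reasoning: list = field(default_factory=list)


def build_case_file(actor, evidence):
    """Gather one actor's events from every source into a single timeline."""
    case = CaseFile(actor=actor)
    relevant = [e for e in evidence if e.actor == actor]
    # Order events chronologically, regardless of which system reported them.
    case.timeline = sorted(relevant, key=lambda e: e.timestamp)
    sources = sorted({e.source for e in relevant})
    # Record what was done so a reviewer can audit the step later.
    case.reasoning.append(
        f"Collected {len(relevant)} events from {len(sources)} sources: "
        f"{', '.join(sources)}"
    )
    return case
```

The point of the sketch is the output shape: a chronological timeline drawn from several systems, plus a plain-language record of each step the agent took, which is what lets an analyst review and validate the result.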

The output isn’t a dashboard or a score. It’s an investigation case file that an analyst can review, validate, and act on. Every step of the agent’s reasoning is visible and auditable. There’s no black box.

One of our customers, a Director of Risk and Compliance, described the impact: “Anzenna AI Investigations has reduced hours and hours of data stitching and swivel-chair research jumping from tool to tool.” That’s exactly the problem we set out to solve. Investigators shouldn’t spend most of their day collecting data. They should spend it making decisions.

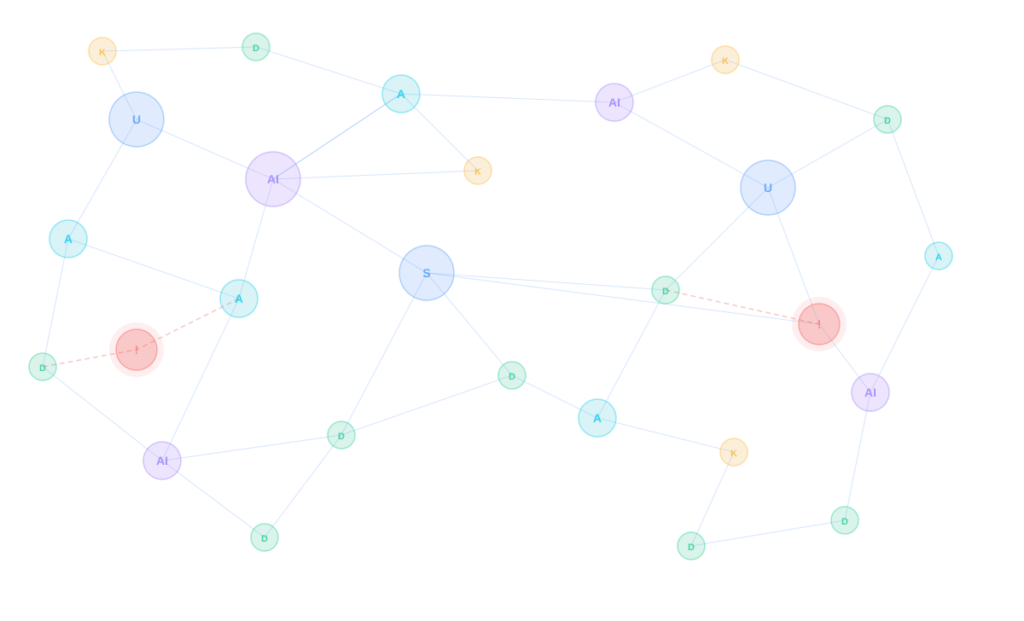

How AI Security Context Graphs Power Insider Risk Investigations

An AI security context graph is a knowledge graph: a structured representation of the relationships between users, devices, applications, data, identities, and behaviors across an organization. Unlike flat log data or siloed alerts, a knowledge graph connects these entities so that each event can be understood in the context of everything around it: who the user is, what role they hold, what systems they normally access, what data they typically touch, and how their current behavior compares to that baseline.

Why Knowledge Graphs Matter for Insider Threat Investigations

Traditional insider risk tools analyze events in isolation. An analyst sees that a user downloaded 500 files from SharePoint but has no immediate way to know whether that user routinely handles large datasets as part of their job or whether this is a one-time event. Answering that question requires pulling data from multiple systems and manually stitching it together.

AI Security Context Graphs eliminate that manual step. By maintaining a continuously updated map of relationships between users, assets, permissions, and behaviors, the graph provides instant context for any event. When an Investigation Agent receives a risk signal, it queries the knowledge graph to understand the full picture: the user’s role, their normal patterns, what applications and data they typically interact with, and how the flagged activity compares to both their own history and the behavior of peers in similar roles.
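As a rough illustration of the "compare to their own history and to peers" step, the sketch below scores a flagged download count against two baselines. This is one simple way to do it (a standard-deviation score), not a description of Anzenna's detection logic. The function names, the threshold of 3.0, and the sample numbers are all assumptions made for the example.

```python
from statistics import mean, pstdev


def deviation_score(observed, history):
    """How many standard deviations `observed` sits above the mean of `history`."""
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        # A perfectly flat history: any different value is maximally unusual.
        return 0.0 if observed == mu else float("inf")
    return (observed - mu) / sigma


def assess_download(observed, own_history, peer_history, threshold=3.0):
    """Flag activity only if it is unusual for the user AND for their peers."""
    own = deviation_score(observed, own_history)
    peers = deviation_score(observed, peer_history)
    return {
        "vs_own_history": own,
        "vs_peers": peers,
        "unusual": own > threshold and peers > threshold,
    }


# A user who normally downloads about a dozen files suddenly downloads 500.
spike = assess_download(500, [10, 12, 8, 15, 11], [20, 18, 25, 22, 30])

# A data analyst who handles roughly 500 files on a normal day.
routine = assess_download(500, [450, 520, 480, 510, 490], [400, 550, 470, 500, 530])
```

With these sample numbers, `spike["unusual"]` is true and `routine["unusual"]` is false: the same 500-file download reads very differently once the baseline is known.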

How Anzenna Uses Knowledge Graphs to Provide Investigation Context

Anzenna’s knowledge graph is built from data ingested across 130+ enterprise integrations, including identity providers, SaaS applications, endpoint security tools, cloud infrastructure, email systems, and developer platforms. The graph continuously maps relationships: which users have access to which applications, which data flows between systems, which AI tools and AI agents are active in the environment, and who is responsible for each.

This graph serves as the foundation for Investigation Agents. Rather than starting each investigation from scratch by querying individual systems, agents query the knowledge graph to immediately understand who is involved, what they have access to, what their normal behavior looks like, and what has changed. That context allows Investigation Agents to distinguish between a sales director legitimately exporting CRM data for a quarterly review and the same action performed by someone who has just given notice.
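The sales director example can be sketched with a toy graph. The code below is a simplified illustration under stated assumptions, not Anzenna's data model: the `ContextGraph` class, the relation names (`normally_uses`, `has_status`), and the escalation rule are hypothetical.

```python
from collections import defaultdict


class ContextGraph:
    """Tiny in-memory graph mapping (subject, relation) to a set of objects."""

    def __init__(self):
        self._edges = defaultdict(set)

    def add(self, subject, relation, obj):
        self._edges[(subject, relation)].add(obj)

    def get(self, subject, relation):
        # Return a copy so callers cannot mutate the graph by accident.
        return set(self._edges.get((subject, relation), set()))


def contextualize_export(graph, user, app):
    """Explain an export event using what the graph knows about the user."""
    normal = app in graph.get(user, "normally_uses")
    leaving = "gave_notice" in graph.get(user, "has_status")
    findings = []
    if normal:
        findings.append(f"{app} is part of {user}'s normal activity")
    else:
        findings.append(f"{app} is outside {user}'s normal activity")
    if leaving:
        findings.append(f"{user} has given notice")
    # Hypothetical rule: escalate if the user is leaving or the app is unusual.
    return {"escalate": leaving or not normal, "findings": findings}


graph = ContextGraph()
graph.add("sales_director", "normally_uses", "crm")
graph.add("departing_rep", "normally_uses", "crm")
graph.add("departing_rep", "has_status", "gave_notice")

routine = contextualize_export(graph, "sales_director", "crm")
risky = contextualize_export(graph, "departing_rep", "crm")
```

Both users perform the same CRM export, but only the second result has `escalate` set, because the graph already holds the fact that the user has given notice. No extra system needs to be queried at investigation time.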

The practical effect is that investigations that previously required hours of manual data gathering and cross-referencing can be completed in minutes. The knowledge graph provides the “why” behind every “what,” turning raw alerts into contextualized findings that analysts can act on with confidence.

Why Insider Risk Management Must Cover AI Agents and AI Tools

Most insider risk programs were designed around a single assumption: the “insider” is a person. That assumption made sense when your environment was people using approved tools on managed devices. It doesn’t hold anymore.

AI tools are being adopted across organizations at a pace that outstrips IT’s ability to catalog them, let alone govern them. Employees connect AI assistants to company data without going through procurement. Developers build AI coding agents that have broad access to repositories. Business teams deploy AI agents that pull from CRMs, HR systems, and financial platforms to automate workflows.

Each of these represents an insider risk vector. Not because the technology is inherently dangerous, but because it operates within your environment, has access to sensitive data, and can take actions. The risk profile of an AI agent with read/write access to your Salesforce instance is fundamentally different from that of a traditional phishing email. It needs to be treated that way.

At Anzenna, we’ve built our platform around this expanded view of insider risk. We don’t treat AI threats as a separate category bolted onto an existing product. Threats from AI tools, AI agents, and humans are all managed in one place, with the same investigation, correlation, and remediation workflows. That’s the only way it scales.

What Automated Insider Risk Investigations Mean for Security Operations

Investigation Agents don’t replace your analysts. They give your analysts back the hours they’re currently spending on manual data collection and correlation. That time can go toward the work that actually requires human judgment: making decisions about remediation, communicating with business stakeholders, and refining policies based on what they’re seeing.

Because Investigation Agents produce full case files with transparent reasoning chains, the output is useful beyond the SOC. Compliance teams get auditable records. Executives get evidence they can bring to the board. Legal teams get documentation that holds up to scrutiny. The knowledge graph provides a single source of truth, making every investigation reproducible and defensible.

Agentless Architecture and Forward-Deployed Engineering

Most insider risk platforms require months of deployment work: installing agents on endpoints, configuring collectors, tuning rules, and waiting for enough data to build baselines. That timeline is a problem when threats are moving now, and security teams are already short-staffed.

Anzenna takes a different approach. The platform is fully agentless and cloud-native. There is no software to install on endpoints, no infrastructure to stand up, and no lengthy integration cycles. Organizations connect their existing identity providers, SaaS applications, and security tools through API-based integrations, and Anzenna begins ingesting data and building its knowledge graph immediately. Typical deployment time is 30 minutes from start to first visibility.

The Forward-Deployed Engineering Model

Fast deployment is only useful if it leads to fast results. That’s why Anzenna operates a forward-deployed engineering model. Instead of handing customers a product and a support ticket queue, Anzenna assigns dedicated engineers who work directly alongside each customer’s security team during onboarding and beyond.

These forward-deployed engineers help configure integrations, tailor investigation workflows to each organization’s risk policies, and ensure the knowledge graph accurately reflects the customer’s environment. They stay engaged after deployment, working with security teams to refine detection logic, tune remediation actions, and adapt the platform as the organization’s tooling and risk landscape evolves.

The result is that customers aren’t just buying software. They’re getting a team that understands their environment and is invested in their outcomes. That model is how we’ve been able to deliver 40% faster threat resolution at customer deployments, and it’s core to how we think about the relationship between our product and the people who use it.

See Agentic AI Investigation Agents Live at RSA Conference 2026

Investigation Agents are the latest step in what we’ve been building since Anzenna’s founding: an insider risk management platform that reflects how organizations actually operate today. People, AI tools, and AI agents all operate within the same environment, and all of them create risk that has to be understood and governed.

We’ll be at RSA Conference 2026 in San Francisco, showing Investigation Agents live. If you’re there, join us at our After Party on Tuesday, March 24 from 5:00 PM to 9:00 PM. I’d welcome the chance to talk through how your team is thinking about insider risk in the age of AI.

Investigation Agents are available now. You can learn more at www.anzenna.ai or see a product demo.

1 Gartner, Cybersecurity Trend: Agentic AI Demands Program Oversight, by Jeremy D’Hoinne and Craig Porter, January 2026. Gartner is a trademark of Gartner, Inc. and/or its affiliates.